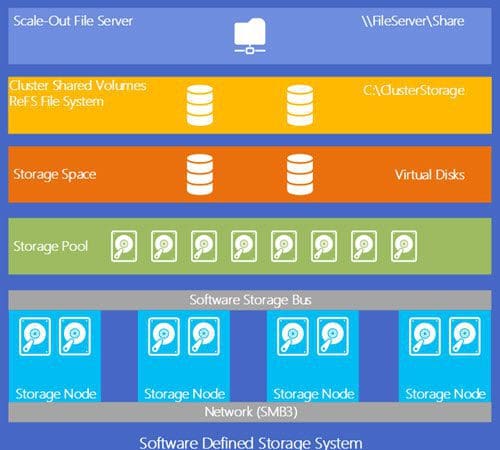

Storage Spaces Direct Basics

As with anything else, I’m going to start with the basics of the stack and then dive into the details of each component over the next few blog posts. There’s a lot to digest, so let’s get rolling.

Storage Spaces Direct Basics – Explained
