Building a Hyper-V failover plan is a critical step for any organization that wants to maintain 24/7/365 availability of its Microsoft Cloud. However, configuring Hyper-V failover clusters and managing Hyper-V hosts come with their own challenges, nuances and steps. This article introduces Hyper-V failover challenges, discusses the benefits of failover and shares some resources where virtualization administrators can find more information.

Tag Archives: Cluster Architecture

Azure vs. AWS: Determine Which You Need

Looking to take your on-premises data center or private cloud to the next level? Maybe you’re looking for a better way to allocate your infrastructure resources based on changing workload requirements. Or maybe you want to extend your data backup and recovery capabilities, or need a resiliency solution that is more cost-effective than the system you’re currently using.

Whatever your reasons, migrating to the public cloud has lots of advantages, including substantial cost savings, elasticity, and easy scalability. If you’re ready to take the next step, the question to consider is this: What cloud service should you go with?

Building Nutanix Ready…What does it mean to be “Ready”?

Storage Spaces Direct Explained – Applications & Performance

Applications

The Microsoft SQL Server product group announced that SQL Server, either virtual or bare metal, is fully supported on Storage Spaces Direct. The Exchange Team did not give a clear endorsement for Exchange on S2D and clearly still prefers that Exchange be deployed on physical servers with local JBODs using Exchange Database Availability Groups, or that customers simply move to O365.

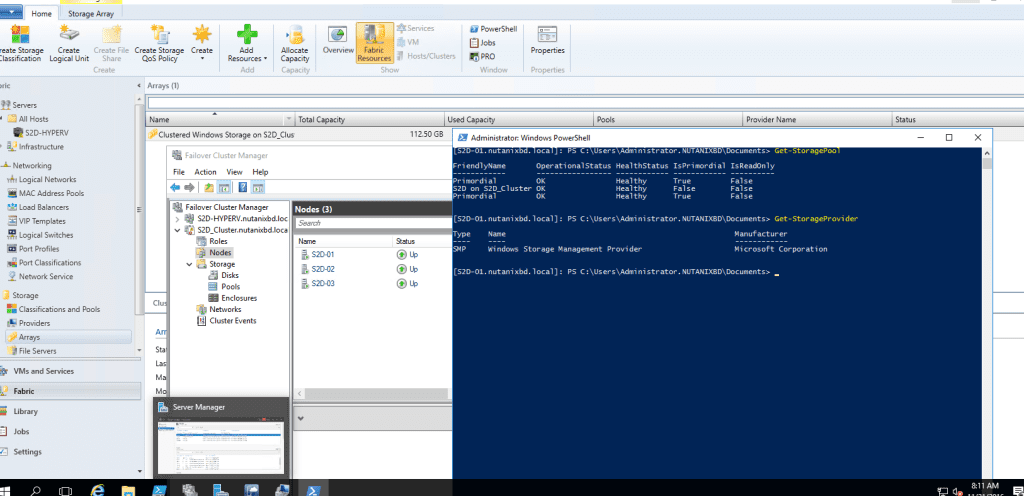

Storage Spaces Direct Explained – Management & Operations

Good day everyone. It’s been a few weeks, busy with work and such. Anyways, this post will go into how Management & Operations are done in S2D. Now, my biggest pet peeve is complex GUI management and yet again, Microsoft doesn’t disappoint. It still takes a number of steps in different interfaces to bring up S2D. Check out Aidan Finn’s blog post on disaggregated management from last year. It still rings true to this day with the release of 2016. It shouldn’t be this complex IMO 🙁 That being said, let’s move to the details.

Storage Spaces Direct Explained – Storage QOS & Networking

Yo everyone…This is going to be a short blog post in this series. I am just covering Networking and Storage QoS as they pertain to S2D. These are the technologies that bind S2D together.

Storage QoS

S2D uses the Storage Quality of Service (QoS) that ships with Windows Server 2016, which provides standard min/max IOPS and bandwidth control. A QoS policy can be applied at the VHD, VM, group-of-VMs, or tenant level. Benefits include:

- Mitigate noisy neighbor issues. By default, Storage QoS ensures that a single virtual machine cannot consume all storage resources and starve other virtual machines of storage bandwidth.

- Monitor end-to-end storage performance. As soon as virtual machines stored on a Scale-Out File Server are started, their performance is monitored. Performance details of all running virtual machines and the configuration of the Scale-Out File Server cluster can be viewed from a single location.

- Manage storage I/O per workload business needs. Storage QoS policies define performance minimums and maximums for virtual machines and ensure that they are met. This provides consistent performance to virtual machines, even in dense and overprovisioned environments. If policies cannot be met, alerts are available to track when VMs are out of policy or have invalid policies assigned.
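To make the min/max idea a bit more concrete, here is a toy model in Python of how a minimum and a maximum IOPS setting could interact when one noisy VM competes with two quieter ones. This is only a sketch under my own assumptions: the `Policy` class, the `allocate` function and the equal-share rule are all hypothetical and made up for illustration. It is not how Windows Server actually schedules I/O.

```python
from dataclasses import dataclass


@dataclass
class Policy:
    """Hypothetical stand-in for a Storage QoS policy (illustration only)."""
    name: str
    min_iops: int  # reserved floor for this flow
    max_iops: int  # hard cap for this flow


def allocate(policies, demand, capacity):
    """Toy allocator: honor minimums first, then share what is left.

    Assumption: leftover capacity is split equally between flows that
    still want more, and no flow ever exceeds its max_iops or its demand.
    """
    # Step 1: every flow gets its reserved floor (or its demand, if lower).
    grant = {p.name: min(demand[p.name], p.min_iops) for p in policies}
    remaining = capacity - sum(grant.values())
    if remaining < 0:
        # In the real feature this is where an out-of-policy alert would fire.
        raise ValueError("minimums cannot be met with this capacity")

    # Step 2: hand out the rest in equal shares, respecting caps and demand.
    ceiling = {p.name: min(demand[p.name], p.max_iops) for p in policies}
    active = [n for n in grant if grant[n] < ceiling[n]]
    while remaining > 0 and active:
        share = remaining // len(active)
        if share == 0:
            break
        for n in active:
            extra = min(share, ceiling[n] - grant[n])
            grant[n] += extra
            remaining -= extra
        active = [n for n in active if grant[n] < ceiling[n]]
    return grant


policies = [
    Policy("noisy", min_iops=100, max_iops=1000),
    Policy("quiet-a", min_iops=300, max_iops=2000),
    Policy("quiet-b", min_iops=300, max_iops=2000),
]
demand = {"noisy": 5000, "quiet-a": 400, "quiet-b": 400}
result = allocate(policies, demand, capacity=1500)
print(result)
```

In this made-up scenario the noisy VM asks for 5000 IOPS but the two quiet VMs still get everything they ask for, because their minimums are reserved before any leftover capacity is shared out.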

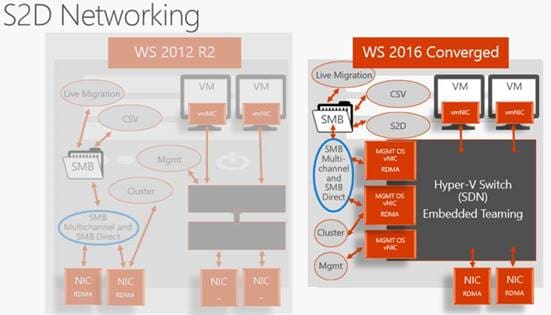

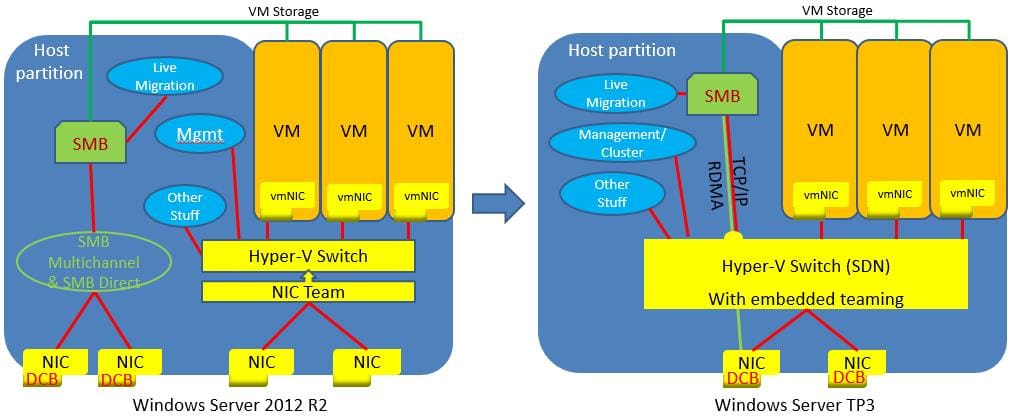

What’s New in Networking with S2D?

In Windows Server 2016, Microsoft added Remote Direct Memory Access (RDMA) support to the Hyper-V virtual switch.

For those who don’t know what RDMA is, it is a technology that allows direct memory access from one computer to another, bypassing the TCP layer, the CPU, the OS layer and the driver layer, which allows for low-latency, high-throughput connections. This is done with hardware transport offloads on network adapters that support RDMA.

Back to Hyper-V virtual switch support for RDMA. This allows you to configure regular or RDMA-enabled vNICs on top of a pair of RDMA-capable physical NICs. Microsoft also added embedded NIC teaming, or Switch Embedded Teaming (SET).

With SET, NIC teaming and the Hyper-V switch are a single entity that can now be used in conjunction with RDMA NICs, whereas in Windows Server 2012 you needed separate NIC teams for RDMA and the Hyper-V switch.

The images below illustrate the architecture changes between Windows Server 2012 R2 and Windows Server 2016.

Next up…Management and Operations…

Until next time, Rob

CPS Standard on Nutanix Released

Microsoft Exchange Best Practices on Nutanix

To continue on my last blog post on Exchange...

As I mentioned previously, I support SEs from all over the world. And again today, I got asked what the best practices are for running Exchange on Nutanix. Funny enough, this question comes in quite often. Well, I am going to help resolve that. There’s a lot of great info out there, especially from my friend Josh Odgers, who has been leading the charge on this for a long time. Some of his posts can be controversial, but the truth is always there. He’s getting a point across.