Many people in IT consider containers, a technology used to isolate applications in their own environments, to be the future.

However, serverless geeks think that containers will gradually fade away. They will exist as a low-level implementation detail bubbling below the surface, but most software developers will not have to deal with them directly. It may seem premature to declare victory for serverless just yet, but there are enough positive signs already. Forward-thinking organizations like iRobot, Coca-Cola, Thomson Reuters, and Autodesk are experimenting with and adopting serverless technologies. All major and minor cloud providers, including players like Azure, AWS, GCP, IBM, Oracle, and Pivotal, are working on serverless offerings. If you want to learn more, take a quick look at this link: https://docs.microsoft.com/en-us/archive/blogs/wincat/validating-hybrid-cloud-scenarios-in-the-server-2012-technology-adoption-program-tap.

Together with the major players, a whole ecosystem of startups is emerging. These startups attempt to solve problems around deployment and observability, provide new security solutions, and help enterprises evolve their systems and architectures to take advantage of serverless. That is not to mention, of course, the vibrant community of enthusiasts who contribute to serverless open source projects, evangelize at conferences and online, and promote ideas within their organizations.

It would be great to close the book now and declare victory for the serverless camp, but the reality is different. There are challenges that the community and vendors are yet to solve. These challenges are cultural and technological: there’s tribal friction within the tech community, inertia to adoption within organizations, and issues around some of the technology itself. Also make sure that you are properly certified if you run cloud-based services; ISO/IEC 27017, the code of practice for information security controls for cloud services, is the relevant standard.

Confusion and the Cloud

While adoption of serverless is growing, more work needs to be done by the serverless community to communicate what this technology is all about. The community needs to bring more people in and explain how serverless adds value. It’s inarguable that there are good questions from members of the tech community. These range from trivial disagreements over “serverless” as a name to more philosophical arguments about fit, use case, and lock-in. This is a perfectly normal example of past successes (with other technologies) breeding inertia to change.

This isn’t to say that those who have objections are wrong. Serverless in its current incarnation isn’t suitable in all cases. There are limitations on how long functions can run, tooling is immature, and monitoring distributed applications made up of many functions and cloud services can be difficult (although some progress is being made to address this).

There’s also a need for a robust set of example patterns and architectures. After all, the best way to convince someone of the merit of a technology is to build something with it and then show them how it was done.

Confusingly, some vendors tend to label their offerings as serverless when they aren’t. This makes it look like they are jumping on the bandwagon rather than thoughtfully building services that adhere to serverless principles. Some of the bigger cloud vendors are guilty of this and, unfortunately, it muddies people’s understanding of the technology.

Go Big or Go Home

At the very large end of the scale, companies like Netflix and Uber are building their own internal serverless-like platforms. But unless you are the size of Netflix or Uber, building your own Function as a Service (FaaS) platform from scratch is a terrible idea. Think of it this way: it’s like building a toaster yourself rather than buying a commoditized, off-the-shelf product. Interestingly, Google recently released a product called Knative. This product, based on the open source Kubernetes container orchestration software, is designed to help build, deploy, and manage serverless workloads on your own servers.

For example, Google’s Bret McGowen, at Serverlessconf San Francisco ’18, gave an example of a real-life customer scenario out on an oil rig in the middle of an ocean with poor Internet connectivity. The customer needed to perform computation on terabytes of telemetry data, but uploading it to a cloud platform over a connection equivalent to a 3G modem wasn’t feasible. “They cannot use cloud and it’s totally unfair to say, ‘sorry buddy, hosted functions-as-a-service or bust.’ Their developers deserve to have the same serverless experience as the rest of us,” was Bret’s explanation of why, in this case, running Knative locally on the oil rig made sense.

He is, of course, correct. Having a serverless system running in your own environment, when you cannot use a cloud platform, is better than nothing. However, for most of us, serverless solutions like Google Cloud Functions, Azure Functions, or AWS Lambda offer a far lower barrier to entry and remove many administrative headaches. It’s fair to say that most companies should look at serverless solutions like Lambda first and, if they don’t satisfy requirements, look at alternatives like Knative and containers second.

The Future…in my humble opinion

It’s likely that some of the major limitations with serverless functions are going to be solved in the coming years, if not months. Cloud vendors will allow functions to run for longer, support more languages, and allow deeper customizations. A lot of work is being done by cloud vendors to allow developers to bring their own containers to a hosted environment and then have those containers seamlessly managed by the platform alongside regular functions.

In the end, do you have a choice? “No, none, whatsoever” was Bret’s succinct, brutal answer at the conference. Existing limitations will be solved, and serverless compute technologies will herald the rise of new architectural patterns and practices. We are yet to see what these are, but this is the future and it is unavoidable.

Cloud computing is where we are, and where the world is going for the next decade or two. After that, probably something new will come along.

But the reasons for moving to cloud computing in general, and for the inevitable wind-down of on-premises to niche special functions, are now pretty obvious:

- Security – Big cloud operators have FAR more security people and capacity than even a big enterprise, and your own disgruntled employees don’t have the keys to the servers.

- Cost-effectiveness – Economies of scale. The rule of big numbers.

- Zero capital outlay – reduced costs.

- For software developers, no more software piracy. That’s a big saving on the cost of developing software, especially for sales in certain countries.

- Compliance – So much easier if your cloud vendor is fully certified, so you only have to worry about your part of the puzzle.

- Energy efficiency – Big, well-designed datacentres use a LOT less global resources.

My next post in this series will be on “The Past and On-prem and the Cloud?”

Until next time, Rob

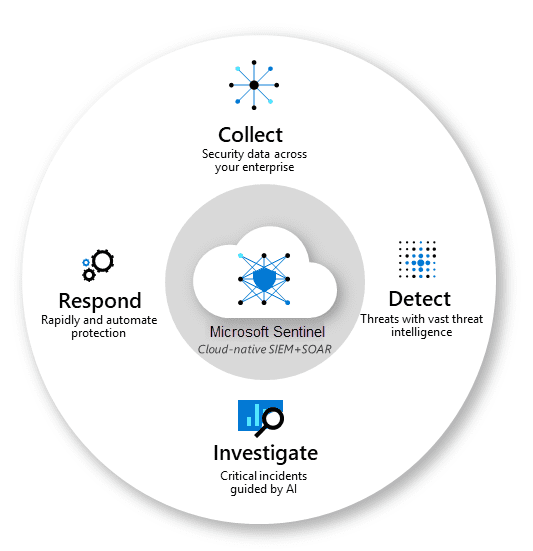

In today’s digital world, protecting an organization’s information and assets from cyber threats has never been more critical. The rise in cyber attacks and security breaches has made it crucial for organizations to have a centralized platform to manage their security operations and respond to incidents promptly and effectively. That’s where Azure Sentinel comes in.
