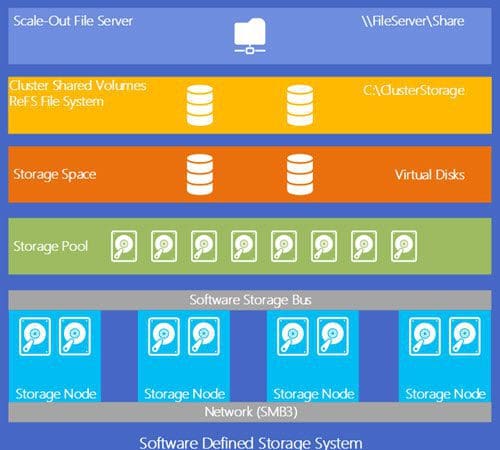

Storage Spaces Direct Basics

Like anything else, I’m going to start with the basics of the stack and then dive into the details of each component over the next few blog posts. There’s a lot to digest, so let’s get rolling…

Monthly Archives: October 2016

The Evolution of S2D

The intention of this blog post series is to give some history of how Microsoft Storage Spaces evolved into what is known today as Storage Spaces Direct (S2D). This first blog post will go into the history of Storage Spaces. Over my next few posts, I will delve further into the recent Storage Spaces Direct release with Windows Server 2016. I will conclude my series with where I think it’s headed and how it compares to other HCI solutions in general. Now let’s go for a ride down memory lane…

Ignite 2016 highlights and pics….

Well, I’m fresh back from Microsoft Ignite 2016. It was a busy week of attending sessions and booth duty for Nutanix :). Here is a summary of highlights and pics from the conference. I plan on diving deep into each of the major highlights over the coming weeks/months. My first blog post will be on Storage Spaces Direct and its evolution. On to the updates…